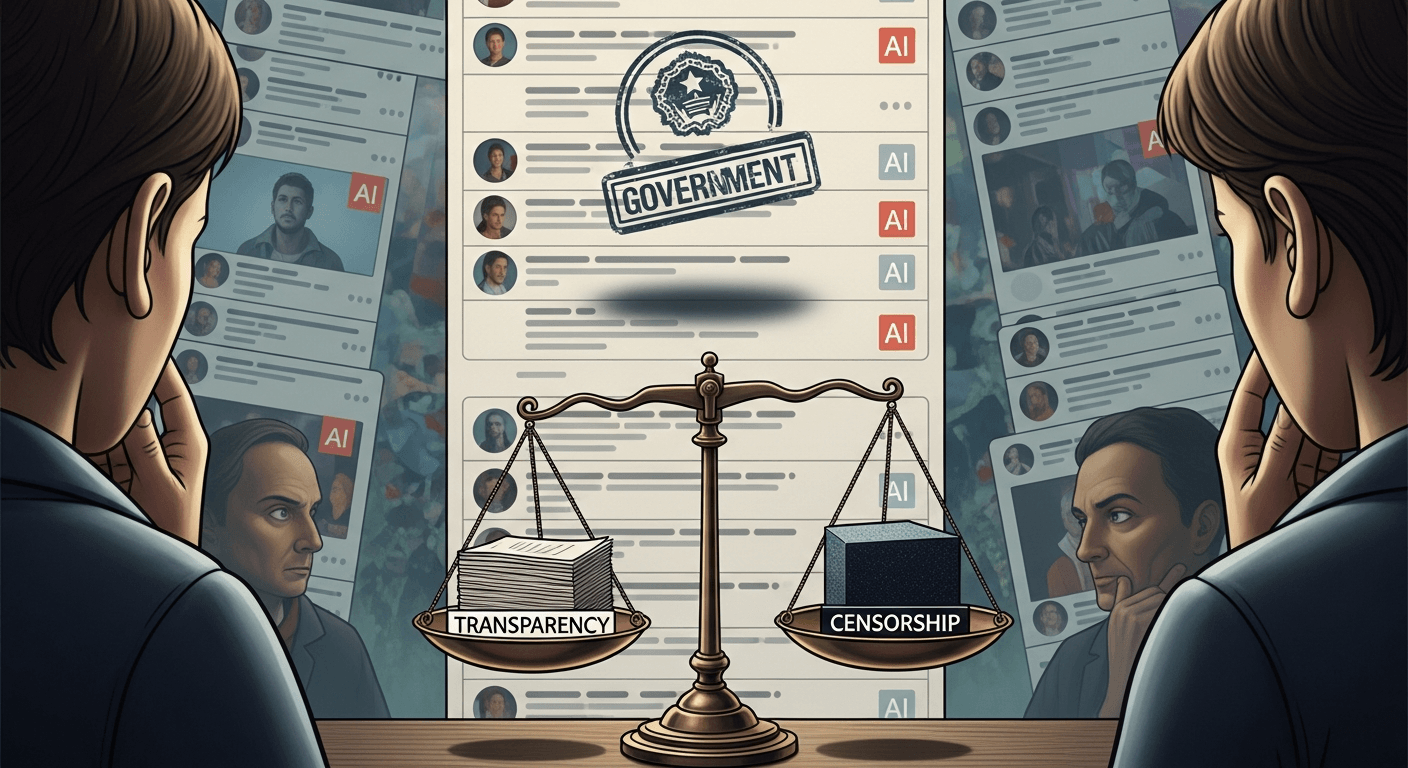

Why a label mandate matters — and why it may hurt more than help

I welcome the spirit behind the government's proposed rule: content that is generated or materially assisted by artificial intelligence should carry a clear label. The objective — to increase transparency and curb the spread of easy-to-produce misinformation — is intuitive and, on its face, sensible. But policies that sound simple on paper often collide with messy human behaviour, platform economics, and technical ambiguity. In past posts I've argued for thoughtful, risk-based AI governance (Regulating Artificial Intelligence; India Dataset Platform). That continuity matters here: labelling is one tool in a larger toolkit, not a silver bullet.

What the mandate requires

- Clear, conspicuous labels on content that is “AI-generated” or “AI-assisted.”

- A compliance regime requiring platforms to detect, display, and sometimes store metadata about such content.

- Potential penalties for non-compliance and expectations for platform reporting.

Translated into practice, social posts, images, deepfakes, and even news summaries might need an “AI-produced” badge. Platforms will be expected to implement automated detection and user-facing UI to surface those badges.

Likely aims and potential benefits

- Transparency: Let users know when content came from models rather than humans.

- Misinformation control: Reduce the spread of synthetic text, images, and audio that can mimic real actors.

- Accountability: Push platforms and creators to take responsibility for what they publish.

If implemented well, labelling could act like nutrition facts on a package — a quick cue for users to apply extra scrutiny.

Unintended consequences — a closer look

Policy metaphors help: imagine labelling as a lighthouse. It can show rocks ahead, but it cannot fix a ship’s steering system. Here are the ways the light can mislead.

Chilling effect on user engagement

If every creative use of assistive AI earns a heavy-handed badge, casual creators may stop experimenting. Small businesses and independent journalists who rely on generative tools to speed workflows could feel penalised.

Burden on platforms, especially smaller ones

Large companies can build detection engines and compliance pipelines; startups and regional apps may not. The rule risks entrenching incumbents who can absorb compliance costs.

Ambiguity for mixed content

How do you label a tweet where a human drafts ideas, an AI suggests phrasing, and the human edits heavily? A binary label (AI or not) collapses a spectrum of assistance into a blunt instrument.

Adversarial labelling and evasion

Bad actors can deliberately mislabel content to hide manipulation or spoof labels to add false credibility. Automated detectors themselves can be gamed by prompt engineering or minor transformations.

False sense of security

Users may see a label and assume they’re safe — ignoring harmful content that is human-originated, or worse, trusting labelled content as ‘checked’ when it is not.

Global regulatory divergence

Different jurisdictions will implement different thresholds and definitions. Content that can be posted without a label in one country may require a label, or be prohibited outright, in another, fragmenting global platforms and creating compliance nightmares.

Impacts on creators, journalism, and platforms

Creators

- Micro-creators and freelancers often use AI to polish headlines, transcribe interviews, or draft social copy. Heavy-handed labelling may stigmatise productivity tools and reduce monetisation opportunities.

Journalism

- Newsrooms increasingly use AI for transcription, translation, and summarisation. Mandatory labels on every AI-assisted paragraph would be impractical and could imply the journalist didn’t verify facts, undermining trust.

Platforms

- Technical costs: detection models, metadata stores, appeal workflows, and audits.

- Operational costs: human review teams, legal counsel, and regulatory reporting.

- UX trade-offs: intrusive badges can degrade the experience; subtle indicators may be ignored.

Legal and enforcement challenges

- Definitional gaps: What is “AI-assisted”? Is a spell-checker AI? What about auto-suggest?

- Attribution problems: Provenance metadata can be stripped, lost, or forged across reposts.

- Cross-border enforcement: Who polices platforms headquartered abroad? How will enforcement scale to billions of pieces of content?

- Overreach risk: Enforcement that focuses on technicalities rather than harm may punish benign uses while letting harmful actors slip through.

Practical recommendations — design the mandate for reality

Adopt practical thresholds: Require labels when generative models produce more than a defined percentage of the final content, or when a model creates original media (images, audio, video) rather than making minor edits.

Use graduated labels: Instead of binary tags, provide graduated indicators: “Human-created,” “Human-edited AI draft,” “AI-generated.” This reflects the spectrum and guides user expectations.

Standardise definitions and metadata formats: A common schema for provenance will help platforms interoperate and auditors to check compliance.

Independent audits and transparency reports: Regular third-party audits of detection systems and random samples of labelled content will build public trust.

UX-first labelling design: Work with designers to make labels informative but not punitive. For example, tooltips could explain what “AI-assisted” means and, for journalism, offer quick ways to see the original prompt or revision history.

Deterrents for bad actors: Implement penalties for deliberate mislabelling and invest in forensic capabilities to trace tampering, coupled with takedown processes for high-harm content.

International coordination: Align with major jurisdictions to avoid fragmentation; lean on multilateral bodies and industry consortia to harmonise definitions and technical standards.
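To make the first two recommendations concrete, here is a minimal sketch of how a threshold rule and graduated labels might fit together. Everything in it is an assumption for illustration: the proposed rule does not define how to measure the AI share of a piece of content, so the character-count measure, the `Provenance` record, the `choose_label` function, and the 10% and 80% cut-offs are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Label(Enum):
    # The three graduated indicators suggested above.
    HUMAN_CREATED = "Human-created"
    HUMAN_EDITED_AI_DRAFT = "Human-edited AI draft"
    AI_GENERATED = "AI-generated"


@dataclass
class Provenance:
    """Hypothetical provenance record; field names are illustrative only."""
    ai_chars: int                    # characters in the final text that came from a model
    total_chars: int                 # characters in the final text overall
    ai_original_media: bool = False  # True if a model created the image/audio/video itself


def choose_label(p: Provenance, low: float = 0.10, high: float = 0.80) -> Label:
    """Map a provenance record to a graduated label.

    `low` and `high` are placeholder thresholds, not values from any regulation.
    """
    # Original media created by a model is labelled regardless of text share.
    if p.ai_original_media:
        return Label.AI_GENERATED

    # Guard against empty content to avoid dividing by zero.
    share = p.ai_chars / p.total_chars if p.total_chars else 0.0

    if share > high:
        return Label.AI_GENERATED
    if share > low:
        return Label.HUMAN_EDITED_AI_DRAFT
    return Label.HUMAN_CREATED


if __name__ == "__main__":
    # A post where a model polished a few phrases versus one it mostly wrote.
    print(choose_label(Provenance(ai_chars=5, total_chars=100)).value)
    print(choose_label(Provenance(ai_chars=90, total_chars=100)).value)
```

The point of the sketch is that a regulator would still have to choose the measure and the cut-offs. A spell-check fix and a fully generated paragraph end up in different buckets only if the thresholds are written down explicitly.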

Conclusion — labels are necessary, but not sufficient

A labelling mandate is an important policy lever, but it must be wielded with nuance. Labels can light a path toward better public literacy, but they can also create fog if poorly defined or poorly implemented. My long-standing call for measured, risk-based AI governance applies here: combine labels with standards, audits, good UX, and international cooperation so transparency genuinely helps people, rather than becoming a checkbox that reassures no one.

Regards,

Hemen Parekh

