Why governments say they are "keeping options open"

I follow policy debates closely, and I often find myself reflecting on why governments — confronted with rapid advances in artificial intelligence — prefer to keep their regulatory options open rather than immediately locking in a single approach.

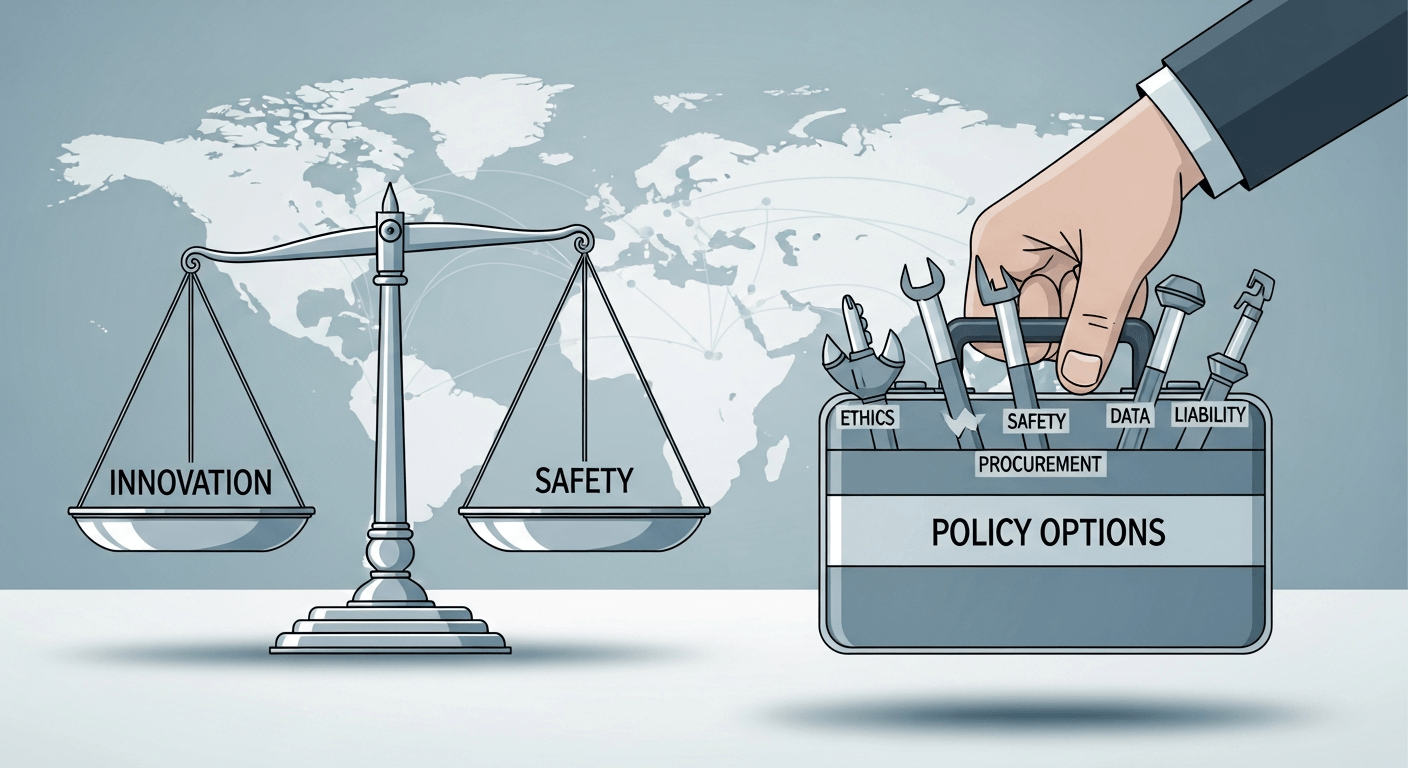

At a basic level, governments face uncertainty about the technology’s trajectory, its risks, and its benefits. Immediate, rigid laws can quickly become obsolete. Governments therefore balance three competing impulses: protect citizens and public goods, preserve room for innovation, and maintain political and legal legitimacy. That balance explains the repeated refrain you hear from policymakers: we are watching, we are learning, and we will legislate when the evidence suggests a clear path forward.

I have written about the tension between voluntary compliance and formal rules in earlier posts such as "How to regulate AI ? Let it decide for itself ?", where I argued for active engagement and readiness to regulate as the technology matures. More recently, in "Algorithmic Rider in Draft Data Law Trips Firms Deploying AI", I discussed how EU-style due diligence and authorisation ideas are reshaping expectations for firms deploying advanced models.

Policy tools governments keep on the shelf

Below are the main levers a government can use — either individually or in combination — when it decides to act.

- Bias and ethics rules: mandatory requirements for fairness testing, explainability thresholds, or prohibited uses for specific applications (e.g., certain predictive policing tools).

- Safety and performance standards: technical standards, robustness testing, red-team exercises, and third‑party audits to validate model behavior under stress.

- Sector-specific regulation: bespoke rules for healthcare, finance, transport, education, and safety‑critical systems where risks and incentives differ.

- R&D funding and public labs: direct investment in public research, open models, and shared evaluation resources to reduce asymmetries in capability.

- Procurement rules: using public purchasing power to set minimum standards and steer markets toward safer, auditable systems.

- Data governance: rules on data quality, consent, access, portability, and restrictions on high‑risk training datasets.

- Liability frameworks: clarifying who is responsible when AI causes harm — developers, deployers, or operators — and under what circumstances.

Each of these tools addresses different harms and incentives. The art of regulation is selecting the right mix at the right time.

Trade-offs to weigh

Every policy choice involves trade-offs:

- Rigid rules can reduce harms quickly but may stifle innovation or shift activity to other jurisdictions.

- Voluntary codes and standards can encourage adoption of best practices but often lack enforcement teeth.

- Sectoral rules allow precision but increase complexity and compliance costs for multi‑use systems.

- Broad, technology‑neutral principles are future‑proof but sometimes lack the operational detail needed for high‑risk uses.

Policymakers therefore often adopt a layered approach: general principles plus sector‑specific mandates for the riskiest uses.

Stakeholder perspectives

- Industry: Firms typically prefer flexible, technology‑neutral frameworks, clear compliance corridors, and recognition for voluntary certification. They warn that heavy-handed rules could harm competitiveness and slow beneficial deployments.

- Civil society: Advocates push for strong safeguards on privacy, nondiscrimination, transparency, and meaningful accountability. They often demand independent audits and redress mechanisms for affected individuals.

- Researchers: Academics and lab teams urge a balanced approach that protects open scientific inquiry while limiting misuse — for example through staged access, model cards, and reproducible evaluation.

As someone who watches these conversations, I believe constructive engagement across these groups leads to better policy outcomes sooner. That’s why I’ve consistently called for proactive dialogue between government, industry, and civil society in my prior writing (see "How to regulate AI ? Let it decide for itself ?").

Timeline considerations

Not all regulation needs to be immediate. A practical timeline often looks like this:

- Near term (months): voluntary codes, guidance, and targeted enforcement of existing laws (consumer protection, safety, anti‑discrimination).

- Medium term (1–3 years): standards, certification schemes, and sectoral rules where risks are clear.

- Long term (3+ years): comprehensive legislation that integrates lessons from pilots, international norms, and matured technologies.

Governments keep options open to monitor incidents, learn from pilot programs, and coordinate internationally before enacting binding laws.

The need for international coordination

AI is global. Divergent national rules create fragmentation, compliance complexity, and regulatory arbitrage. Coordinated approaches, whether through multilateral institutions, standards bodies, or bilateral accords, help harmonise expectations on safety, data flows, and liability. My reading of recent developments suggests the EU’s assertiveness and parallel initiatives elsewhere are nudging global norms, making coordination both harder and more necessary (see "Algorithmic Rider in Draft Data Law Trips Firms Deploying AI").

Recommended principles for future legislation

If governments decide to legislate, I believe laws should reflect these core principles:

- Flexibility: allow rules to adapt as technology and evidence evolve.

- Technology‑neutrality: focus on outcomes and risk rather than specific design choices.

- Transparency: require meaningful disclosure about capabilities, limits, and evaluation methods.

- Accountability: allocate responsibilities and ensure accessible remedies for harms.

- Risk‑based approach: calibrate obligations to the potential impact of the AI use case, with stricter guardrails for higher‑risk scenarios.

These principles reduce the chance of brittle laws that either overreach or under‑protect.

Closing reflections and a call to action

Keeping options open is not the same as inaction. It should mean purposeful observation, piloting, standards building, and engagement — so that when legislation is needed, it is proportionate, evidence‑based, and internationally coherent.

If you care about how AI will shape public life, get involved: read draft guidance, join public consultations, and ask questions of vendors and institutions that deploy AI. Public participation improves policy.

Call to action: tell your local representatives that you support a measured, risk‑based approach to AI that protects people without freezing beneficial innovation.

Regards,

Hemen Parekh


