When a startup says “no” to the Pentagon

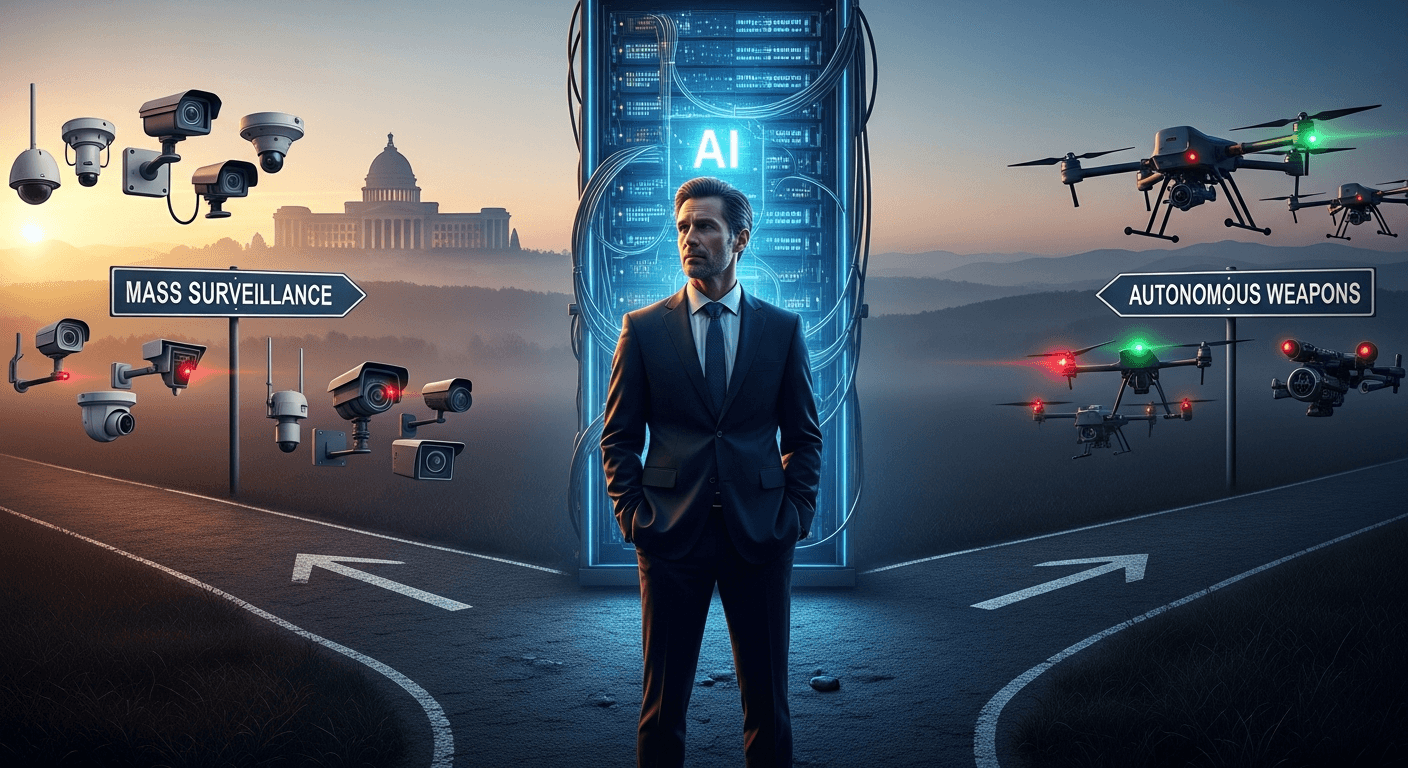

I watched closely as Dario Amodei, the CEO of Anthropic, drew a public red line around two specific uses of powerful AI that his company will not enable: mass domestic surveillance and fully autonomous weapons. He put that line where technology, law, and conscience meet, and the Department of Defense pushed back hard.

The facts are stark and well reported: Anthropic asked for written assurances that its model, Claude, would not be used for mass surveillance of Americans or to power weapons that remove humans from the decision loop. The Pentagon sought the ability to use the model for "all lawful purposes," and tensions escalated into threats of contract termination, supply-chain risk designations, and even the invocation of the Defense Production Act to compel access (CBS News, Politico).

Why this matters to me — and to any citizen

I build and think about technology, but I also think about the systems that govern it. This episode crystallizes several tensions:

- Ethics vs. utility: The Pentagon argues operational flexibility is essential for national security. Anthropic argues there are categories of use it will not enable because of the societal harms they could produce.

- Contract language and legalese: The details often hide the power. Phrases like "for all lawful purposes" can be paired with clauses that allow safeguards to be overridden, effectively nullifying ethical commitments.

- Private companies as de facto policy-makers: When a handful of firms control the most capable models, they are forced to choose whether to be moral gatekeepers, reluctant suppliers, or something in between.

This is not just a corporate quarrel. It’s a moment when the architecture of democratic accountability — law, oversight, and public debate — has to catch up with what these systems enable.

Two specific technical and moral risks

I want to be explicit about the two red lines Anthropic set, because they highlight distinct failure modes:

- Mass domestic surveillance: AI can fuse disparate data sources at scale and infer associations, intentions, and vulnerabilities. Even if individual actions are lawful, scaled inference can chill dissent, entrench bias, and distort civic life.

- Fully autonomous weapons: Removing the human judgment loop from lethal decisions shifts responsibility and amplifies risk. AI systems are fallible, brittle in edge cases, and often inscrutable — conditions I do not want formalized into life-or-death military systems.

Both possibilities raise not only tactical risks but existential ethical questions about what a free society tolerates in the name of security.

What the standoff exposes about governance

This clash teaches a few lessons we should internalize:

- Narrow technical promises are not governance. Written contractual assurances matter, but they can be undone by broad catch-all clauses or emergency authorities.

- Democracy needs clearer rules. Relying on corporate norms or internal military policies alone will not be enough; we need statutory clarity from Congress and norms agreed at allied and multilateral levels.

- Transparency and independent audits are essential. If high-stakes models are used in defense, there must be independent verification, red-team testing, and clear chains of accountability.

Practical steps I think are reasonable

I’m pragmatic about national defense: societies must deter and defend. But pragmatism is not the same as unmoored expediency. Here are concrete moves worth pushing for now:

- Statutory guardrails: Congress should define and codify limits (and emergency procedures) for surveillance and weaponization of large models.

- Binding contractual language with audit rights: Contracts should include unambiguous prohibitions, independent oversight, and mechanisms to resolve disputes without improvised legalese.

- International norms: The U.S. should lead allied efforts to ban or tightly regulate fully autonomous weapons and mass domestic AI surveillance.

- Tech-government liaison offices: Long-term, a neutral technical office (with security clearances) could evaluate frontier models on behalf of government customers and make binding recommendations.

A final personal note

I respect the gravity of national defense. I also respect the moral clarity that Dario Amodei expressed when he said some lines are not negotiable. Refusing certain customers or uses is costly; it is also a form of accountability.

We are living through a governance moment. How we resolve these public-private tensions will define not just the capabilities of our machines but the character of our institutions. If we fail to set durable rules now, the defaults will be set by contract templates, emergency powers, and technological momentum — not by democratic choice.

I want to see a path where innovation and democratic values reinforce each other. If that requires new laws, clearer contracts, and stronger oversight, then we should get to work.

Regards,

Hemen Parekh

