From intelligence that advises to one that acts: the rise of physical AI

I’ve been watching — and writing about — the arc of AI for years: from systems that answered questions and nudged decisions to machines that now put hands, wheels, and actuators into the messy world. This transition from virtual counsel to physical action is not a single invention but the intersection of long-running advances in robotics, perception, control, machine learning, and edge compute. In this post I want to place that trajectory in context, give concrete examples, and offer practical takeaways for technologists and policymakers.

A very short history: advice → embodiment

The first wave of applied AI gave us recommendation engines, chatbots, and decision-support tools. They sat inside servers and APIs — advising, not doing. The second wave grafted intelligence onto hardware. We moved from a model that says “you should” to systems that say “I will.” Commercial milestones illustrate the shift:

- Mobile manipulation and mobile robots in warehouses (the Kiva story and Amazon Robotics deployments) scaled logistics automation and changed operational design for fulfillment centers (see: Amazon Robotics overview).

- Surgical robots like Intuitive’s da Vinci have extended human capability in operating rooms, enabling more precise minimally invasive procedures while retaining the surgeon-in-the-loop model (see: Intuitive Surgical da Vinci).

- Autonomous vehicles moved from lab demos to robotaxis on public roads; companies publish safety-impact metrics from millions of autonomous miles to demonstrate real-world performance gains (see: Waymo Safety Impact hub).

- Boston Dynamics’ shift from viral research demos to commercial platforms (Spot, Stretch, Atlas) shows how dynamic mobility, manipulation, and fleet software are converging into field-ready robotic services (see: Boston Dynamics Atlas and Spot examples).

Those developments make the point plainly: physical AI isn’t one product — it’s a systems problem solved across hardware, software, data, and operation.

Core technologies that made it real

- Robotics hardware: actuators, lightweight high-torque motors, compliant grippers, batteries and modular chassis that permit continuous operation outside the lab.

- Perception: multi-modal sensing (cameras, lidar, radar, depth, tactile sensors) combined with computer vision and sensor fusion to build situational awareness.

- Control and planning: classical control, model predictive control, and learned policies (RL, imitation learning) for locomotion, grasping, and manipulation.

- Machine learning: perception networks, policy learning, sim-to-real transfer techniques, and foundation models adapted for embodied tasks.

- Edge and distributed compute: on-device and nearby edge inference to meet low-latency constraints and preserve privacy while cloud and fleet-level computation handle heavy training and analytics.

These technologies are not independent. The value lies in how they are combined: robust perception feeds real-time control, which is in turn supervised by fleet management running at the edge and in the cloud.

Where physical AI is already reshaping the world

- Manufacturing and warehouses: automated picking, mobile racks, AMRs and robotic arms that raise throughput while reducing repetitive strain. Amazon’s deployments illustrate scale and integration with cloud/edge systems (see: Amazon Robotics history).

- Healthcare: robotic-assisted surgery and AI-guided diagnostics expand clinical capabilities while requiring careful human oversight; da Vinci systems are a leading example (see: Intuitive Surgical).

- Logistics and last-mile: autonomous forklifts, sorting arms, and delivery robots streamline flows and reduce turnaround times.

- Home and service robots: vacuum robots are mainstream; more sophisticated home assistants and telepresence platforms are arriving slowly as perception and safety improve.

- Autonomous vehicles: robotaxis and delivery AVs are demonstrating safety and operational metrics at scale; public trials and deployments are already changing mobility patterns (see: Waymo safety hub).

Ethical, safety and regulatory considerations

Physical AI changes the risk model: mistakes hurt people and property. Key considerations include:

- Alignment and intent: ensuring machines pursue goals that match human values and operational constraints.

- Robustness and verification: validating systems across edge cases and environments, using simulation and large-scale testing.

- Human oversight and fail-safe design: preserving meaningful human control and graceful degradation modes.

- Legal frameworks and liability: who is responsible when a robot acts autonomously — manufacturer, operator, or software provider? Regulators are scrambling to clarify liability, reporting, and certification processes.

- Transparency and auditability: logging decisions, sensor data, and model versions to enable post-incident analysis.

Policy must go beyond checklist regulation and enable standardized safety testing, data transparency for public analysis, and conditional deployment regimes (pilot → monitored scale → full operations).

Economic and social impacts

Physical AI will raise productivity but also create distributional challenges.

- Jobs: automation will displace some repetitive roles while creating demand for technicians, robot operators, simulation engineers, and data specialists.

- Productivity and costs: robots reduce cycle times and safety risks; incumbents that adopt them can unlock large efficiency gains.

- Inequality: regions and firms with access to capital and talent will capture disproportionate gains unless policy intervenes with retraining and transition programs.

My practical view: plan for transitions. Invest in reskilling, incentivize human-in-the-loop roles, and design complementary policies (portable benefits, apprenticeship pathways) so automation enhances broad-based prosperity.

Technical challenges and research directions

- Generalization: policies that work across diverse, unstructured environments remain an unsolved challenge.

- Sim-to-real bridging: domain randomization, latent space methods, and better simulators reduce real-world retraining costs; progress here lets deployments scale faster (see: sim-to-real surveys and examples).

- Sample efficiency: learning with far fewer real-world trials using offline RL, model-based methods, and better transfer learning.

- Multi-agent coordination: fleets of robots need scalable communication, decentralized planning, and conflict resolution under uncertainty.

- Safety-oriented learning: integrating formal verification, safe RL, and runtime assurance so learned policies are certifiable for critical domains.

Each challenge is an opportunity for interdisciplinary teams combining controls, ML, HCI, and domain engineering.
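Of the directions above, domain randomization is the easiest to show in a few lines. The sketch below is only an illustration under my own assumptions: the `SimParams` fields and the numeric ranges are hypothetical, and a real pipeline would pass each sampled configuration to a physics simulator before running a training episode.

```python
import random
from dataclasses import dataclass


@dataclass
class SimParams:
    """Simulator settings that are re-sampled for every training episode."""
    friction: float
    mass_kg: float
    light_intensity: float
    camera_noise_std: float


# Hypothetical ranges, chosen only for illustration.
RANGES = {
    "friction": (0.4, 1.2),
    "mass_kg": (0.5, 2.0),
    "light_intensity": (0.3, 1.0),
    "camera_noise_std": (0.0, 0.05),
}


def sample_params(rng: random.Random) -> SimParams:
    """Draw each parameter uniformly from its range."""
    return SimParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


def randomized_episodes(n: int, seed: int = 0) -> list[SimParams]:
    """Return n independently sampled configurations, reproducible via the seed."""
    rng = random.Random(seed)
    return [sample_params(rng) for _ in range(n)]
```

The idea is that a policy trained across many such variations is less likely to overfit to one simulator configuration, so the real world looks like just another sample.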

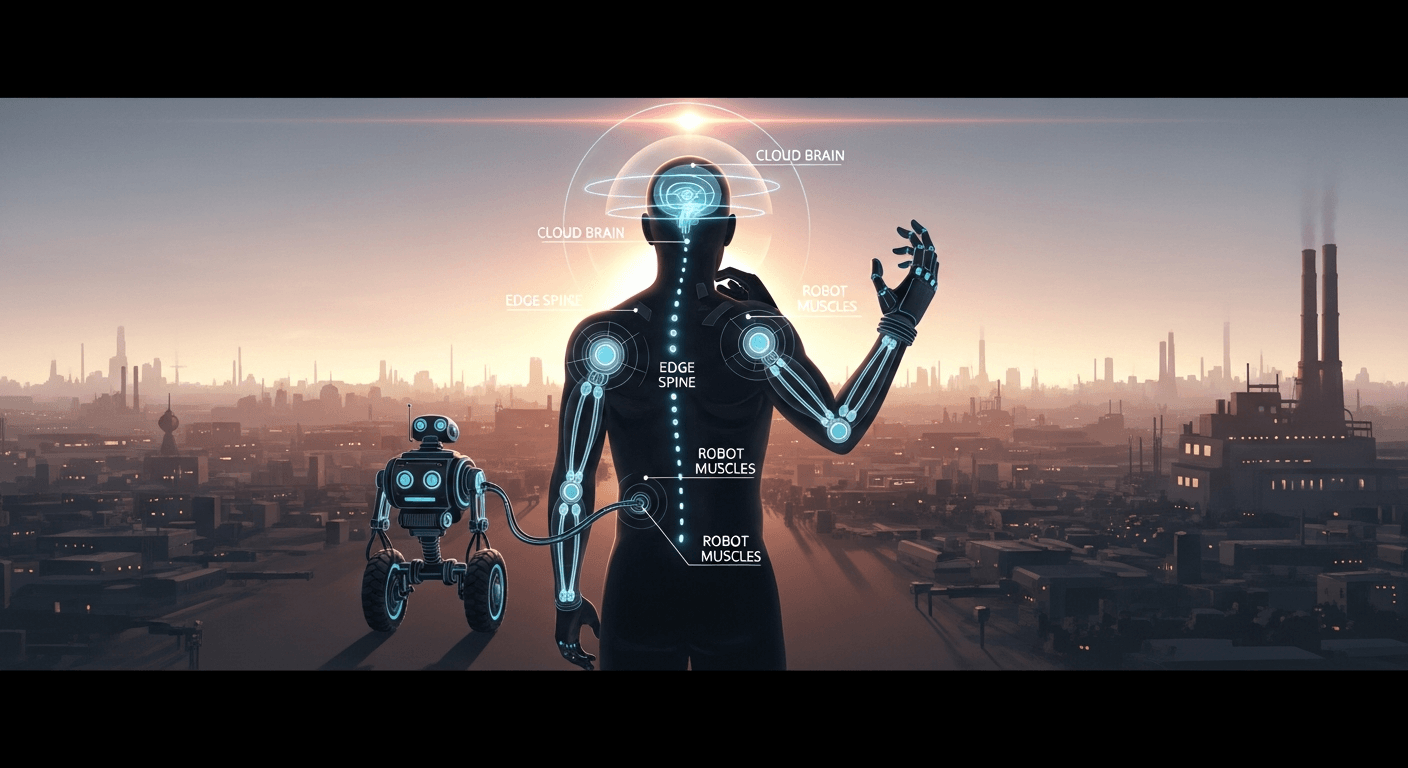

Metaphor: intelligence as a nervous system

Think of cloud AI as a brain, edge compute as the spinal cord, and the robot hardware as the muscles and senses. You need all three layers well-integrated to move reliably through the world. Weakness in any layer — brittle perception, slow nerves, or fragile muscles — breaks the system.

Actionable takeaways

For policymakers:

- Fund public testbeds and transparent benchmarking for safety-critical deployments.

- Create conditional permits for pilots that require public reporting of incidents and data for independent analysis.

- Invest in worker transition programs tied to emerging industry needs (robot maintenance, data operations, simulation engineering).

For technologists and leaders:

- Design for graceful failure and human override from day one.

- Prioritize sim-to-real pipelines and edge-enabled inference to accelerate adoption.

- Build cross-functional teams (controls + ML + systems + ops) and instrument deployments with rich logging and observability.

Looking ahead

Physical AI will not replace human judgment; it will reshape how we design work, cities, and care. The systems that thrive will be those that combine robust engineering, ethical guardrails, and clear operational practices. In prior posts I have written about adjacent issues, including the governance of conversational agents and principles for responsible systems, and the lessons still apply: build with transparency, require human feedback loops, and design controls before capability. For continuity with these ideas, see my reflections on chatbot safeguards and the need for human-in-the-loop guardrails (earlier thoughts on chatbot safety and personal AIs).

I’m excited and cautious. The move from advising to acting is the most consequential chapter in AI’s history so far — it demands engineering rigor, regulatory imagination, and societal will.

Regards,

Hemen Parekh

