I watched the Anthropic–Pentagon saga unfold last week like a high-stakes chess game played at double speed. On one side was Dario Amodei, the co‑founder and CEO of Anthropic, a lab that built Claude with an explicit safety-first ethos. On the other side was Sam Altman, the public face of OpenAI, who announced a Pentagon agreement in a matter of hours and then spent the weekend defending it to staff and the market.

Context and quick primer

- Anthropic began as an offshoot of safety-focused research. Its Claude models were designed with explicit contractual and technical guardrails intended to limit military use for mass domestic surveillance and fully autonomous lethal systems.

- OpenAI, under Sam Altman, has been less doctrinaire in its public posture but has increasingly engaged with government and classified work while trying to preserve safety commitments.

The timeline (compressed)

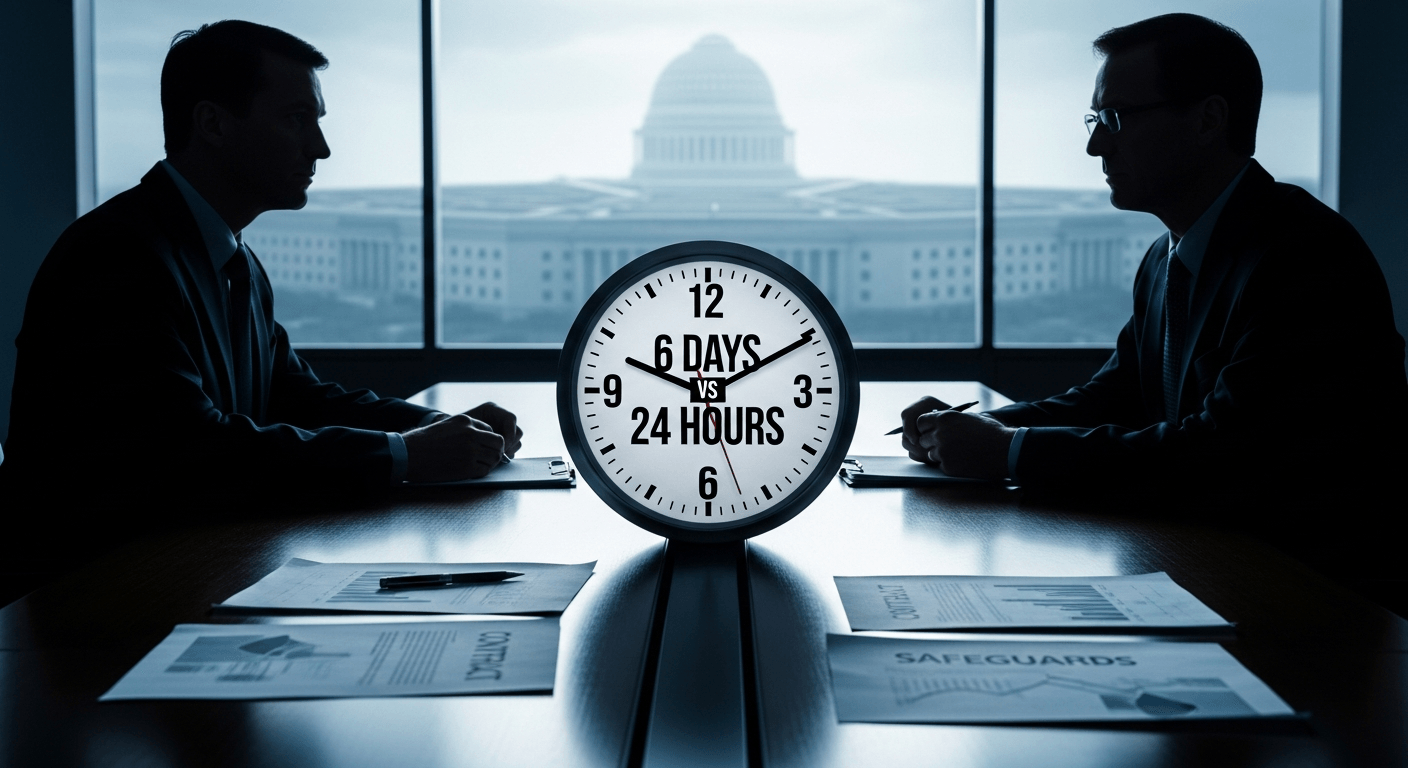

- February 24–27: Negotiations between Anthropic and the Pentagon unravel as the Department presses for broader "lawful use" language; Anthropic refuses to accept contract formulations that it says would allow mass domestic surveillance or fully autonomous weapons (Fortune).

- February 27: In under 24 hours, OpenAI announces it has reached an agreement to allow deployment of its models on Pentagon classified networks; the news drops just hours after Anthropic’s public rejection and a government escalation (Fortune, Business Insider).

- In the days that follow: Anthropic’s public statements and internal communications continue to emerge, with its CEO signalling a slower internal reckoning and, later, apologising for aspects of how the company communicated the dispute (Times of India).

Why did it look like Dario Amodei took six days to "realise" something that Sam Altman closed in 24 hours? A few plausible factors:

- Different negotiation styles and leverage. Anthropic appears to have fought for explicit contractual redlines; OpenAI reportedly leaned on a mix of technical, legal, and operational assurances and moved aggressively to draft language the Pentagon accepted (Axios).

- Messaging and PR readiness. OpenAI communicated internally and externally through a coordinated all‑hands and public post within a day; Anthropic’s communications were more reactive and extended over several days, producing a perception of delay even while the company maintained it was negotiating in good faith (Business Insider).

- Risk tolerance and calculus. Anthropic’s founders explicitly positioned the company around safety constraints that are not easily unbundled from technical deployments; moving faster may have felt like compromising core identity. OpenAI, flush with customer and funding momentum, may have judged the reputational cost of a rushed deal as acceptable to stabilise industry‑government relations (TheStreet).

Implications for trust and government–AI relationships

This episode is a test case in three areas:

- Institutional trust: If companies are seen to flip or move urgently to placate government demands, other labs and the public will ask whether safety redlines are durable or negotiable under pressure.

- Contract architecture vs. technical safeguards: OpenAI’s approach — combining technical controls, legal terms, and rapid engagement — suggests governments may prefer practical, auditable mitigations over categorical contractual bans. That has consequences for who gets to set the standards.

- Precedent and power: A quick deal by one major supplier can be read as a lever by the government: accept these terms or risk losing federal business. That dynamic could incentivise convergence on lower‑friction suppliers and compress diversity in the AI ecosystem.

Expert readings and hypotheticals

Security analysts will say this is a classic coordination problem: governments need capable AI, vendors need market access, and society needs guardrails. If the Pentagon can obtain robust technical assurances and independent auditing, that reduces one axis of conflict; but contracts alone cannot address the political and normative questions about what constitutes ethical use.

Other analysts will note a competitive incentive: when one firm demonstrates it can meet government needs quickly, others face pressure to follow — either by matching safeguards or losing customers and influence.

My takeaways — and why I’ve been sounding this alarm before

I’ve written about operational guardrails and the need for reproducible safety measures in AI deployments before (see my earlier piece, Parekh's Law of Chatbots). This episode reinforces two themes I keep coming back to:

- Speed is a feature of modern geopolitics; deliberation is expensive. That mismatch will keep producing ugly optics and moral dilemmas.

- Durable safety requires both technical mechanisms and public legitimacy. One without the other becomes brittle when pressure spikes.

If there’s a provocative conclusion here: firms and democracies cannot outsource the ethics of powerful tools to overnight deals. The question isn’t whether someone can do a 24‑hour contract — it’s whether those contracts withstand independent scrutiny, protect civil liberties, and preserve competitive pluralism in AI supply.

We should treat last week’s sprint as a wake‑up call: design for transparency and auditability today, because tomorrow’s emergency will ask for instant answers and we’ll regret the ones we gave without preparedness.

Regards,

Hemen Parekh

